DevOps Concepts #2: Autoscaling

Why we need autoscaling, plus a short story about how we worked without it using Docker Swarm. Learn about auto scaling on AWS and Auto Scaling groups.

You launch an application, and overnight, it starts getting huge traffic. The number of users skyrockets.

But will your application hold up? Autoscaling allows you to automatically adjust your resources, whether it's scaling up during peak hours or scaling down during calm times, ensuring your application remains performant and resilient.

Today, we are going to talk mainly about auto scaling as a concept and about Auto Scaling groups.

Short story

I remember a few years ago, we were working on an application that nobody expected to get much traffic any time soon. It was in development for a long time, and of course, it was hosted on a single DigitalOcean server.

Back then, we used Docker Swarm for some applications. Yeah, I know, it’s a weird technology to use for the app. But those were weird days, and it worked.

You might be asking what Docker Swarm is. It’s a way to run your Docker containers in a sort of cluster mode, described with a docker-compose.yml file.

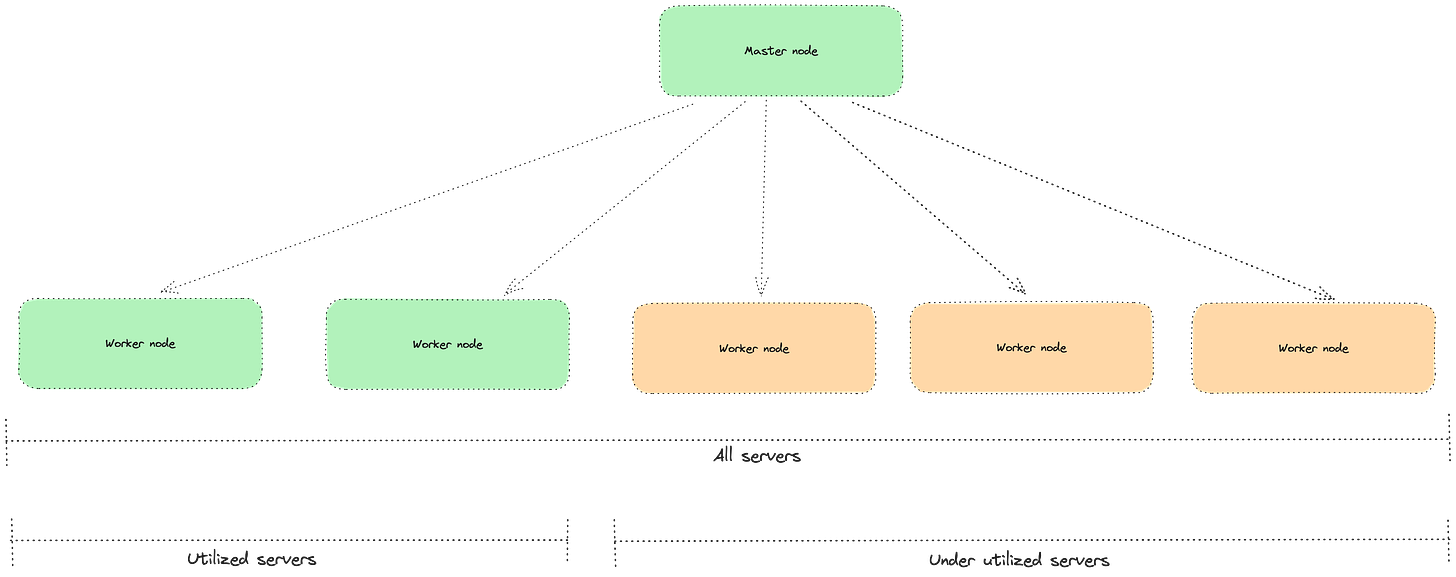

But you take care of all your nodes and how they are distributed. There are two kinds of nodes:

Master node (a manager node in Docker’s terms) - manages the cluster and, in our case, takes all the traffic and distributes it

Worker node - holds one or multiple Docker containers and handles the traffic that is sent from the master

You can already see the bottlenecks in this type of architecture, and of course it’s not Cloud based. It all works until you receive a burst of traffic and don’t have enough resources to serve all your users.

Plus, if you need to share the same file system with Docker Swarm, you need something like GlusterFS to share files between nodes. And it just adds to the complexity, because you have multiple workers that all connect to the master, which holds your files. Ideally, you should use object storage like S3 or a managed file system like EFS from AWS instead of doing it on your own, which is painfully hard.

How do you scale up during peak hours? Well, you do it manually.

The engineer adds more resources and joins the new servers to the network. But in order to deploy to them, he needs to redeploy the entire app, which causes downtime.

It eventually starts working and serving the users, until the next peak hours come and the traffic again exceeds all resources.

You can buy more servers upfront, but then you end up with underutilization: you pay for resources you don’t use most of the time. That’s one reason the entire serverless movement happened: pay for what you use.

But it works. It solves your issues until the traffic uses up all the resources. You design for the worst possible scenario based on your experience with previous traffic spikes.

At some point, Docker Swarm was a viable solution, and I think it’s still an OK solution for small apps without too much complexity.

When you manage the server on your own, you have to take care of patching and security updates. Otherwise, you can leave a giant hole in your system. Over time, Cloud always wins for me.

Autoscaling

With Autoscaling in the Cloud, you can scale up and scale down as you go. You don’t need to wake up your engineer to add more servers and connect them.

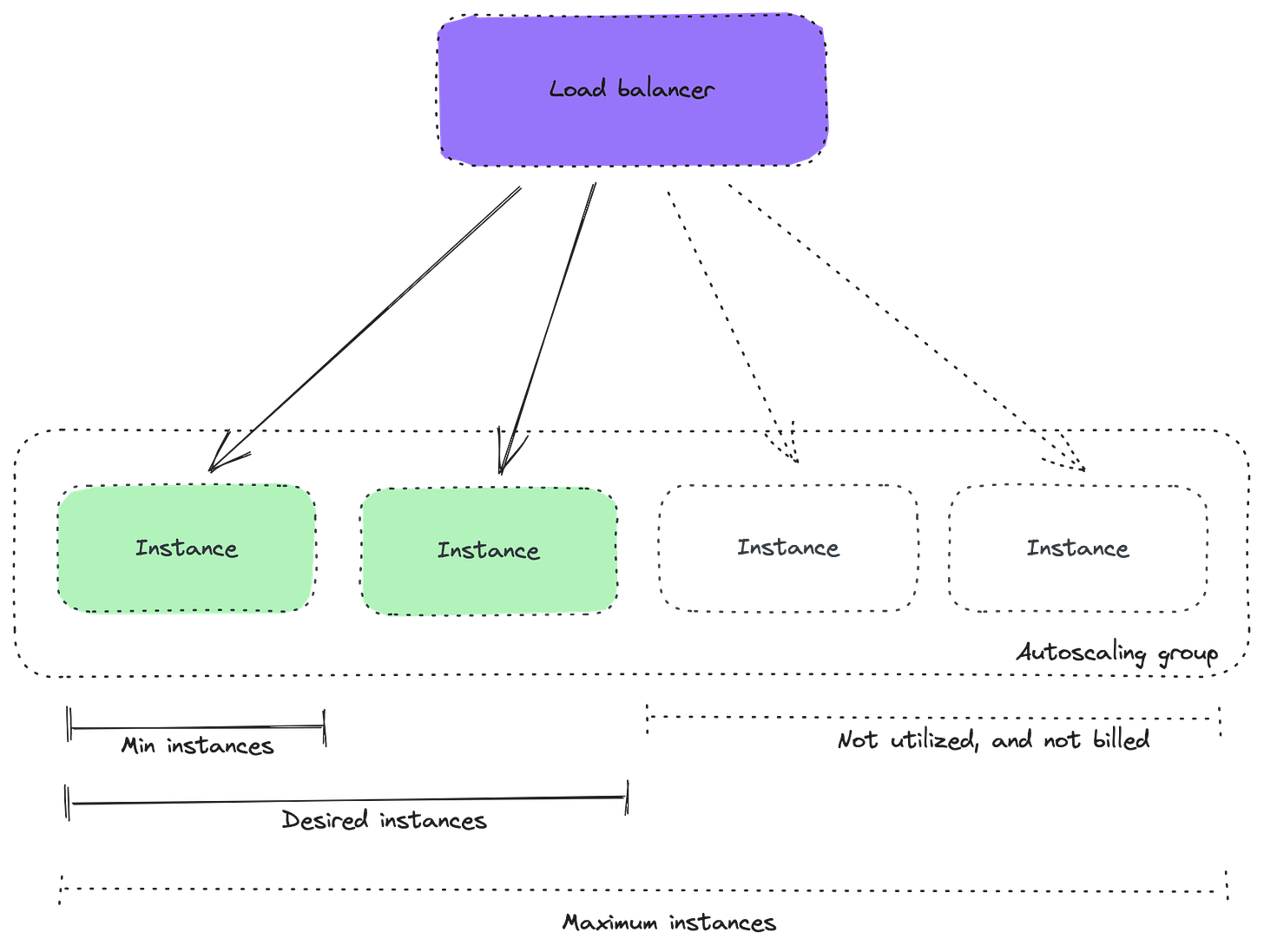

In AWS you can do this with something called an Auto Scaling group. You define what kind of resources you expect and their limits:

Minimum instances - the capacity that you want to keep all the time; the group never goes below that number.

Desired instances - the capacity the group tries to maintain right now. When you scale up/down, you increase/decrease the desired capacity, and the Auto Scaling group adds/removes instances based on your configuration.

Maximum instances - the maximum capacity that your Auto Scaling group can reach; it won’t go over that number.
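To make the three numbers concrete, here is a minimal sketch in plain Python. It is not the AWS API: `GroupLimits` and `clamp` are hypothetical names, and the only thing it models is the idea that whatever capacity a scaling rule asks for, the group stays between the minimum and the maximum.

```python
from dataclasses import dataclass


@dataclass
class GroupLimits:
    """Hypothetical model of an Auto Scaling group's size limits."""
    min_size: int
    max_size: int

    def clamp(self, desired: int) -> int:
        """Keep a requested desired capacity within [min_size, max_size]."""
        return max(self.min_size, min(desired, self.max_size))


limits = GroupLimits(min_size=2, max_size=10)
print(limits.clamp(1))   # below the minimum, so the group stays at 2
print(limits.clamp(6))   # within the limits, so 6 is kept
print(limits.clamp(25))  # above the maximum, so the group is capped at 10
```

The desired capacity is the only number that moves during normal operation; the minimum and maximum are the guard rails around it.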

If you look at the image above, it’s exactly what we were doing manually, except that you don’t need to buy servers upfront at all. You can request them from AWS as you need them.

AWS still allows you to commit to servers upfront: if you know that you will need them, you can buy Reserved Instances for one or three years and get a big discount. And in case you need more servers, you can always request them on demand, but the price is higher. We will cover the costs of these solutions another time.

Still, we didn’t answer one of the most important questions. What happens with our sleepy engineer? Does he still wake up to scale things up?

There are a few ways to let him sleep. One of them is scaling on a schedule. If you have predictable traffic and know that at 9 AM New York time you will have an increase in traffic, you can schedule more servers to be added at that time.

And this is done and handled automatically by AWS. So your engineer can keep sleeping and wake up just before standup.
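Scheduled scaling boils down to a lookup from time of day to desired capacity. The sketch below is plain Python under made-up assumptions: the `SCHEDULE` entries and the `desired_capacity_at` helper are hypothetical, and only the 9 AM increase comes from the example above.

```python
from datetime import time

# Hypothetical schedule: (start time in New York local time, desired capacity).
# Entries must be sorted by start time.
SCHEDULE = [
    (time(0, 0), 2),   # quiet night hours
    (time(9, 0), 6),   # morning traffic increase
    (time(18, 0), 3),  # evening slowdown
]


def desired_capacity_at(now: time) -> int:
    """Return the capacity of the latest schedule entry that has started."""
    capacity = SCHEDULE[0][1]
    for start, scheduled_capacity in SCHEDULE:
        if now >= start:
            capacity = scheduled_capacity
    return capacity


print(desired_capacity_at(time(8, 59)))   # 2
print(desired_capacity_at(time(9, 0)))    # 6
print(desired_capacity_at(time(20, 30)))  # 3
```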

But what about other use cases? What if traffic suddenly starts coming during the night? Ideally, you would scale based on the demand.

There are a few possible strategies you can use with AWS Auto Scaling groups:

Simple scaling

It’s an easy one, and you can use a couple of metrics to scale.

You can define two types of rules:

Scale-out - add instances if a metric stays above a threshold for a period of time. For example, add 2 more instances if the CPU utilization is over 80%.

Scale-in - remove instances if a metric stays under a threshold for a period of time. For example, remove 1 instance if the CPU utilization is below 30%.

This way, you can add some cost optimization and react to the traffic at the same time. If your server is underutilized, you remove one and let it be handled by fewer servers since they can probably handle it.
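The two rules above can be sketched as one small function. This is plain Python, not AWS code: `simple_scaling` is a hypothetical name, the limits of 2 and 10 instances are assumptions, and `avg_cpu` is assumed to be already averaged over the evaluation period.

```python
def simple_scaling(current: int, avg_cpu: float,
                   min_size: int = 2, max_size: int = 10) -> int:
    """Return the new desired capacity for one evaluation of the rules."""
    if avg_cpu > 80.0:
        desired = current + 2   # scale out: add 2 instances
    elif avg_cpu < 30.0:
        desired = current - 1   # scale in: remove 1 instance
    else:
        desired = current       # between the thresholds, do nothing
    # Never leave the group's minimum/maximum limits.
    return max(min_size, min(desired, max_size))


print(simple_scaling(4, 85.0))  # 6
print(simple_scaling(4, 20.0))  # 3
print(simple_scaling(4, 50.0))  # 4
print(simple_scaling(9, 95.0))  # 10, capped by the maximum
```

Keeping a gap between the two thresholds matters: if they were close together, the group could keep adding and removing the same instance.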

Target tracking scaling

It’s all oriented to the average value of a metric across the entire group, and to maintaining that average. You can use predefined metrics on AWS like:

Average CPU Utilization

Average received/sent bytes

Average request count per instance behind the load balancer

As you can see, it’s a different tactic from simple scaling, because here you don’t define when to add/remove instances. You just define the target value and let AWS handle the rest.

It also doesn’t react to every momentary spike. For example, with a target of 70% CPU utilization, instances are added if the average stays over 70% for a couple of minutes, and removed if it stays below 70%.

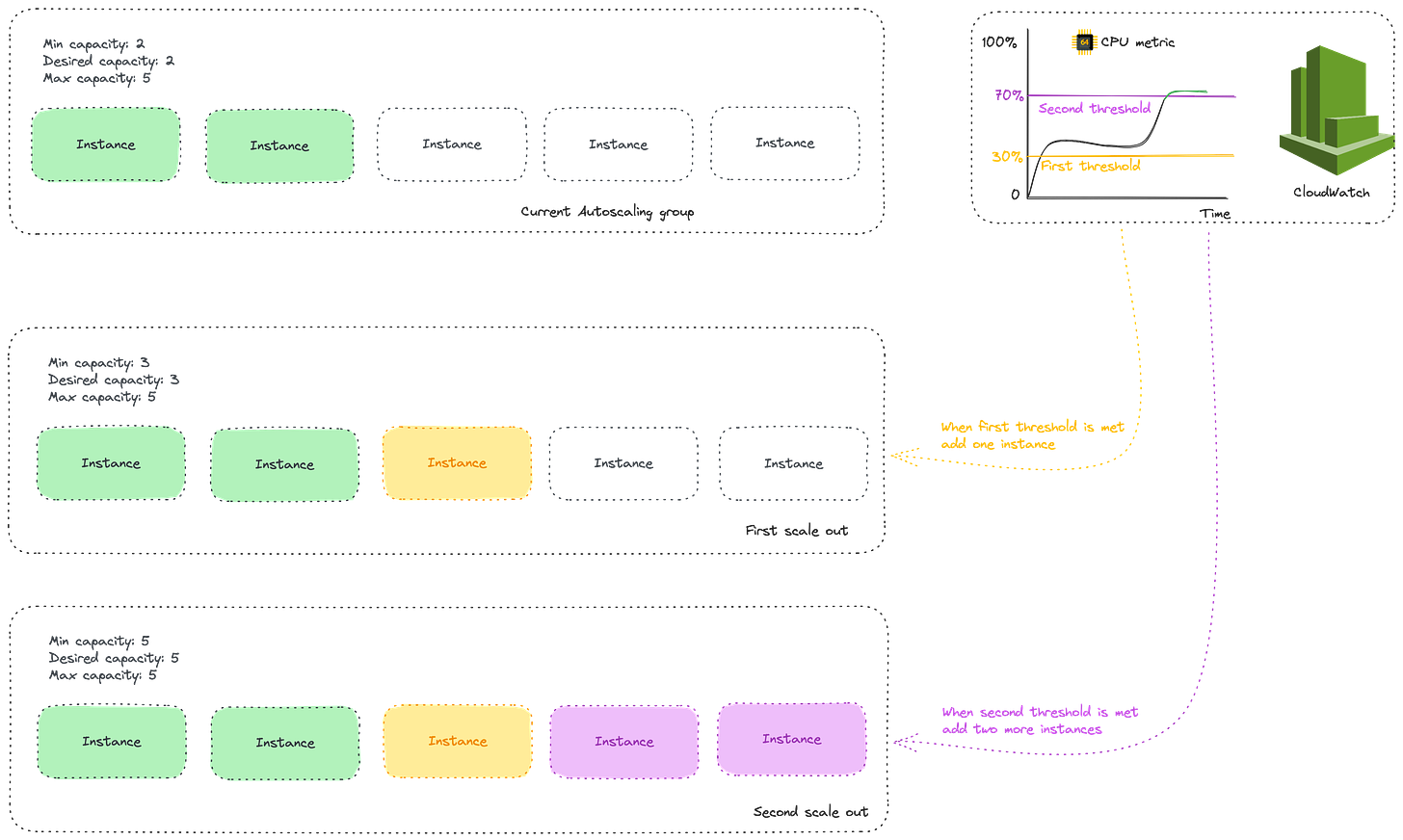
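One way to picture target tracking is as a proportional calculation: if the average is above the target, grow the group by the same ratio. The sketch below is a simplification and an assumption on my side, not the exact algorithm AWS runs; `target_tracking` and its default limits are hypothetical.

```python
import math


def target_tracking(current: int, avg_metric: float, target: float,
                    min_size: int = 2, max_size: int = 10) -> int:
    """Estimate the capacity that brings the group average back to the target."""
    # If 4 instances run at 90% and the target is 70%, the same load
    # spread over ceil(4 * 90 / 70) = 6 instances lands at or under 70%.
    desired = math.ceil(current * avg_metric / target)
    return max(min_size, min(desired, max_size))


print(target_tracking(4, 90.0, 70.0))  # 6
print(target_tracking(4, 70.0, 70.0))  # 4
print(target_tracking(4, 35.0, 70.0))  # 2
```

Notice that there are no "add 2" or "remove 1" numbers anywhere: the target value is the only thing you configure.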

Step scaling

It builds on simple scaling by adding steps to it. You can define how much to scale out based on different thresholds.

For example, you define that if CPU utilization is over 30%, the number of instances increases by 20%, and if CPU utilization is over 70%, it increases by 50%.

This way, you react in proportion to the current load. That’s useful because if the app is nearing top utilization, you probably need more instances, like in the example above.
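The stepped example can be sketched like this. It is plain Python with hypothetical names (`STEPS`, `step_scaling`), an assumed maximum of 20 instances, and one simplification: fractional instances are rounded up, which may differ from how AWS rounds percentage changes.

```python
import math

# (lower CPU bound in percent, percent of capacity to add), highest bound first.
STEPS = [
    (70.0, 50),
    (30.0, 20),
]


def step_scaling(current: int, avg_cpu: float, max_size: int = 20) -> int:
    """Apply the first step whose lower bound the CPU average exceeds."""
    for lower_bound, percent in STEPS:
        if avg_cpu > lower_bound:
            added = math.ceil(current * percent / 100)  # assumption: round up
            return min(current + added, max_size)
    return current  # below every step, nothing changes


print(step_scaling(10, 45.0))  # 12, the 20% step
print(step_scaling(10, 85.0))  # 15, the 50% step
print(step_scaling(10, 20.0))  # 10, no step matched
```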

Launch Templates

When you work with Auto Scaling, you need to provide it with what you expect it to run for you.

AWS still supports launch configurations, but they are being phased out in favor of launch templates. Back in the day, we would use Ansible scripts to install all required packages on a new server. It was a nice way to automate things.

Docker Swarm from the story above is also a good example: it allows us to provision new containers based on a Docker image. But you cannot provision the entire server with it, only new containers in the network.

With Launch Templates, we define what kind of AMI (Amazon Machine Image) we want to use. You can build one of your own or create it from an existing EC2 instance, and then create an Auto Scaling group based on that template.

End

I feel nostalgic about the Docker Swarm days; it was a simple architecture. But I’m grateful that we have solutions like Auto Scaling groups from AWS, where most things are taken care of for us and we don’t need to manually add servers to the infrastructure.

Hope this helps you understand the idea of autoscaling and how it’s done in the Cloud. It’s an important topic to understand even if you are not a DevOps engineer, so you can communicate efficiently with other engineers and DevOps engineers.

In the end, we want to hear from you, so let us know what you think about today’s newsletter. It’s a short poll, 10 seconds maximum. Rate us, so we can provide you with more value.